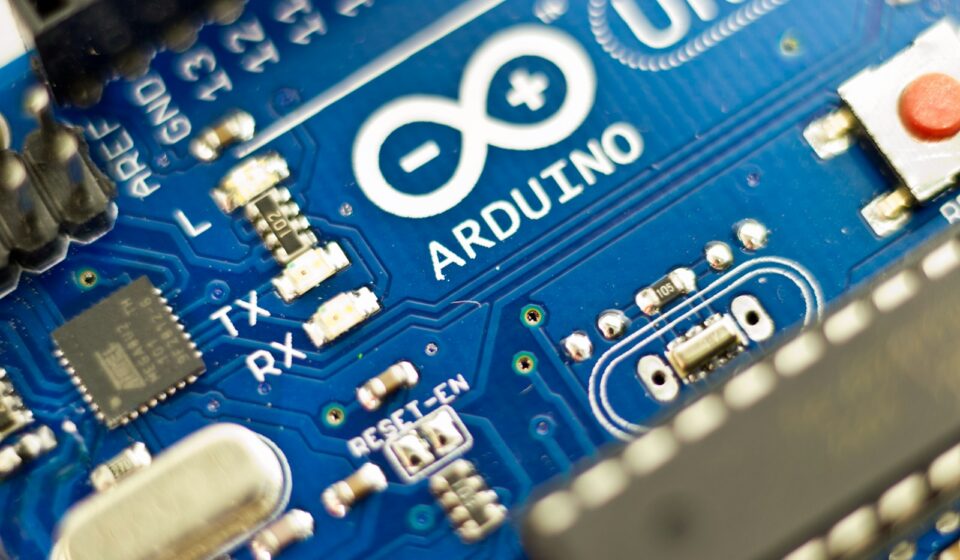

I still remember the exact acrid smell of a frying ATmega328P microcontroller. It hit my nose right around 2:00 AM on a freezing Tuesday in Chicago.

My team and I were desperately trying to cram a highly compressed, quantized machine learning model (just a basic anomaly detection algorithm for an industrial motor) onto a standard Arduino board. We had exactly 2 kilobytes of SRAM to play with. We spent days shaving off decimal points, optimizing C++ arrays, and praying the build wouldn’t overflow the available memory. It failed miserably. Smoke literally poured from the breadboard when the voltage regulator gave out after we hooked up too many external sensors to compensate for the board’s lack of native processing power.

That was the harsh reality of grassroots hardware development just a few years ago. You either had the friendly, accessible blue boards that couldn’t handle serious math, or you had massive, expensive, power-hungry industrial silicon that required a PhD to program.

Then the news dropped.

Qualcomm buying Arduino. Just let that sink in for a second.

The San Diego wireless juggernaut—the company whose Snapdragon chips basically run the mobile universe—swallowing whole the scrappy, open-source darling of the maker movement. It sounds like a bizarre corporate fever dream, right? But if you strip away the initial shock and look at the raw, unfiltered trajectory of edge computing, this acquisition makes terrifyingly perfect sense.

The Day the Maker Movement Got a Neural Processing Unit

Let’s be brutally honest about where hardware development has been stuck lately. We’ve spent the last decade obsessed with the cloud. Every smart thermostat, every connected doorbell, and every factory floor sensor was built on a deeply flawed premise: collect raw data, beam it over a shaky Wi-Fi or cellular connection to a centralized server, crunch the numbers, and send a command back.

It was slow. It was expensive. It was a privacy nightmare.

Edge AI flips that script entirely. The goal is to put the brain directly on the device. You want the security camera to know it saw a human without ever talking to an Amazon Web Services bucket. But doing that requires serious local processing power—specifically, Neural Processing Units (NPUs) that can execute matrix math at lightning speed without draining a coin-cell battery in three minutes.

Qualcomm has those chips. They have billions of them.

What they didn’t have was a frictionless way to get those chips into the hands of millions of independent developers, students, and agile engineering teams. Have you ever tried to buy a single, high-end Qualcomm developer board for a weekend prototype? It’s a miserable experience. You’re usually greeted by massive enterprise distribution channels, demands for minimum order quantities of 10,000 units, and documentation locked behind heavily gated corporate portals.

Arduino is the exact opposite. They are the undisputed kings of the onboarding experience.

Silicon Reality Check: Why Qualcomm Needed the Blue Board

You can buy an Arduino at a local hobby shop, plug it into your laptop via USB-C, download their free IDE, and have an LED blinking in exactly four minutes. That kind of developer goodwill is priceless. It takes decades to build. Qualcomm looked at the math and realized they could spend billions trying to build a grassroots developer community from scratch—and probably fail—or they could just buy the biggest one on the planet.

I saw this friction firsthand back in 2021. We were consulting for a mid-sized logistics company that wanted to track vibrations on their conveyor belts to predict bearing failures before they happened. The client demanded a cheap, battery-powered solution. We initially prototyped the rig using a standard Arduino Nano 33 BLE Sense. It was a beautiful little board, but the moment we tried running a TensorFlow Lite model with any real accuracy, the inference time spiked to over 800 milliseconds per cycle. The battery died in a day.

We tried pivoting to a more powerful ARM-based industrial board. The hardware was capable, but the toolchain was an absolute nightmare. My senior embedded engineer spent three weeks just trying to get the proprietary compiler to talk to our custom sensor drivers. We missed the deadline.

This is the exact gap this acquisition closes.

Imagine the simplicity of the Arduino IDE—that friendly, uncluttered interface—backed by the raw, unadulterated computing violence of a Snapdragon NPU. You could write a few lines of C++, hit upload, and suddenly your $30 board is executing real-time computer vision at 60 frames per second while sipping milliwatts of power.

Unpacking the Hardware Marriage

So, what does the actual hardware look like when a mobile titan absorbs an educational hardware company? It completely changes how we approach physical computing.

Historically, Arduino relied heavily on 8-bit AVR microcontrollers, eventually graduating to 32-bit ARM Cortex-M chips. They are fantastic for reading temperature sensors or driving stepper motors. They are absolutely useless for running large language models or complex machine vision algorithms locally.

Qualcomm brings their Hexagon DSP (Digital Signal Processor) and sophisticated power management integrated circuits (PMICs) to the table. This isn’t just about making things faster. It’s about fundamentally altering the power-to-performance ratio.

From Blinking LEDs to Neural Networks

If you’re an engineer staring down a new project right now, you have to rethink your entire architecture. You are no longer constrained by processing bottlenecks at the edge. You are constrained by your imagination and your data quality.

Let’s look at how the specifications stack up when we project what a Qualcomm-powered Arduino board could deliver to the market. The Qualcomm column is a projection, not a datasheet, but these are the metrics that decide whether a commercial IoT deployment lives or dies on the factory floor.

| Hardware Metric | Classic Arduino (Portenta H7) | Standard Raspberry Pi 4 | Projected Qualcomm-Arduino Edge |
|---|---|---|---|
| Primary Architecture | Dual Core ARM Cortex-M7/M4 | Quad Core ARM Cortex-A72 | Custom Snapdragon with Hexagon NPU |
| Machine Learning Focus | TinyML (Very lightweight) | CPU-bound (High latency) | Hardware-accelerated INT8/FP16 |
| Active Power Draw | ~150 – 200 mW | ~3.4 – 5.0 Watts | ~400 mW (During heavy inference) |
| Vision Processing | Low-res, sub-10 fps | 1080p, CPU bottlenecked | 4K ISP, real-time object detection |
| Wireless Connectivity | Wi-Fi / Bluetooth LE | Wi-Fi / Bluetooth | Integrated 5G / Wi-Fi 6E / NB-IoT |

Look closely at that table. The jump in active power draw from a classic microcontroller to a Raspberry Pi is massive—we’re talking watts versus milliwatts. A Raspberry Pi requires a hefty wall adapter or a massive lithium-ion battery bank. The projected Qualcomm-Arduino board sits right in the sweet spot. It gives you nearly the processing grunt of a single-board computer but keeps the power consumption low enough to run off a standard solar-charged battery setup in a remote agricultural field.
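To put rough numbers on that watts-versus-milliwatts gap, here is a minimal C++ sketch of the runtime arithmetic. The function name `runtimeHours` and the 0.85 derating factor are my own assumptions for illustration, not figures from any datasheet, and the model treats the load as constant.

```cpp
#include <cassert>

// Estimated runtime in hours for a battery of `capacity_wh` watt-hours
// feeding a constant load of `load_mw` milliwatts.
// `usable_fraction` is an assumed derating for converter losses and
// depth-of-discharge limits; tune it for your own pack.
double runtimeHours(double capacity_wh, double load_mw,
                    double usable_fraction = 0.85) {
    assert(load_mw > 0.0);
    assert(usable_fraction > 0.0 && usable_fraction <= 1.0);
    const double usable_mwh = capacity_wh * usable_fraction * 1000.0;
    return usable_mwh / load_mw;
}
```

With a hypothetical 10 Wh pack, `runtimeHours(10.0, 400.0)` comes out to about 21 hours at the projected 400 mW, while `runtimeHours(10.0, 3400.0)` gives 2.5 hours at the low end of the Raspberry Pi figure. Real deployments duty-cycle the load, so treat this as a worst-case, always-on estimate.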

The “Edge AI” Mirage vs. Actual Implementation

Everybody loves talking about edge computing at conferences. The reality of deploying it is usually a bloody, frustrating mess.

I remember sitting in a stuffy conference room in 2022 listening to a vendor pitch a “smart camera” system for retail stores. They promised it would count foot traffic, analyze customer demographics, and detect shoplifting in real time. We bought a test unit. The moment we installed it, the latency was so bad it was identifying people three seconds after they walked out the door. The local processor was simply choking on the video feed.

Edge AI isn’t magic. It’s a brutally strict mathematical equation balancing memory bandwidth, thermal throttling, and model size.
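One way to make that balancing act concrete is a roofline-style lower bound: an inference can finish no faster than the slower of its compute time and its memory-transfer time. The sketch below is a simplification under my own assumptions. The struct names are hypothetical, and thermal throttling is modeled crudely as a multiplier on compute throughput.

```cpp
#include <algorithm>
#include <cassert>

// Per-inference cost of a model (hypothetical descriptor).
struct Workload {
    double ops;          // arithmetic operations per inference
    double bytes_moved;  // weight and activation bytes read or written
};

// Peak capabilities of a device (hypothetical descriptor).
struct Device {
    double ops_per_sec;    // peak arithmetic throughput
    double bytes_per_sec;  // peak memory bandwidth
    double throttle;       // in (0, 1]; 1.0 means no thermal throttling
};

// Lower bound on latency: whichever of compute or memory traffic is slower.
double minLatencySeconds(const Workload& w, const Device& d) {
    assert(d.ops_per_sec > 0.0 && d.bytes_per_sec > 0.0);
    assert(d.throttle > 0.0 && d.throttle <= 1.0);
    const double compute_s = w.ops / (d.ops_per_sec * d.throttle);
    const double memory_s = w.bytes_moved / d.bytes_per_sec;
    return std::max(compute_s, memory_s);
}
```

If the memory term dominates, a faster NPU will not help; a smaller or more aggressively quantized model will. Measured latency will always sit above this bound, so it is a sanity check, not a prediction.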

When you acquire a company like Arduino, you aren’t just buying hardware. You are buying the abstraction layer. Arduino’s true genius has always been hiding the ugly, low-level register manipulations behind simple, human-readable functions. digitalWrite(LED_BUILTIN, HIGH) is vastly easier than setting individual bits in a memory-mapped port register by hand.
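To show what that kind of wrapper hides, here is a small desktop-runnable sketch. It is not Arduino’s actual implementation: `SimPort` and `writePin` are hypothetical names, and the "register" is an ordinary variable standing in for a memory-mapped port.

```cpp
#include <cassert>
#include <cstdint>

// Stand-in for a memory-mapped 8-bit output port. On real hardware this
// would be a hardware register; here it is a plain variable so the sketch
// compiles and runs on a desktop machine.
struct SimPort {
    std::uint8_t bits = 0;
};

// Friendly wrapper in the spirit of digitalWrite: the caller names a pin
// and a level, and the bit masking stays out of sight.
void writePin(SimPort& port, unsigned bit, bool level) {
    assert(bit < 8);
    const std::uint8_t mask = static_cast<std::uint8_t>(1u << bit);
    if (level) {
        port.bits |= mask;                              // set the bit
    } else {
        port.bits &= static_cast<std::uint8_t>(~mask);  // clear the bit
    }
}
```

Calling `writePin(port, 5, true)` reads like intent. The equivalent raw form, `port.bits |= 0x20`, reads like a puzzle to anyone who has not memorized the pin map.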

Qualcomm desperately needs that abstraction for AI. They need a function that looks something like AI.detectObject(cameraFeed) that handles all the complex tensor allocations, hardware acceleration routing, and memory management in the background. If they pull that off, the entire industry shifts overnight.
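Nobody outside Qualcomm knows what that API will look like, so take the following as a purely hypothetical sketch of the pattern, not a real SDK. Every name here (`EdgeAI`, `InferenceBackend`, `BrightnessStub`, `Frame`, `Detection`) is invented for illustration, and the "model" is a placeholder that just thresholds average brightness.

```cpp
#include <cassert>
#include <cstdint>
#include <memory>
#include <utility>
#include <vector>

struct Frame { std::vector<std::uint8_t> pixels; };  // grayscale, hypothetical
struct Detection { bool found; double score; };

// Anything that can run a model on a frame: CPU, DSP, NPU, and so on.
class InferenceBackend {
public:
    virtual ~InferenceBackend() = default;
    virtual Detection run(const Frame& frame) = 0;
};

// Placeholder backend: uses mean brightness as the "score". A real backend
// would execute a quantized network on an accelerator.
class BrightnessStub : public InferenceBackend {
public:
    Detection run(const Frame& frame) override {
        if (frame.pixels.empty()) return {false, 0.0};
        double sum = 0.0;
        for (std::uint8_t p : frame.pixels) sum += p;
        const double score = sum / (255.0 * frame.pixels.size());
        return {score > 0.5, score};
    }
};

// The facade user code sees: one call, no tensors, no memory management.
class EdgeAI {
public:
    explicit EdgeAI(std::unique_ptr<InferenceBackend> backend)
        : backend_(std::move(backend)) {
        assert(backend_ != nullptr);
    }
    Detection detectObject(const Frame& frame) { return backend_->run(frame); }

private:
    std::unique_ptr<InferenceBackend> backend_;
};
```

The design point is that user code depends only on `detectObject`. Swapping the stub for an accelerator-backed implementation would not change a single line of the caller’s sketch.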

Framework: Building Your Next Smart IoT Device

If you want to survive this transition and actually build hardware that works in the real world, you need a highly specific methodology. You can’t just slap a pre-trained model onto a board and hope for the best. Here is the exact operational logic map my team uses when migrating legacy sensor networks to edge AI architectures.

- Define the Absolute Minimum Inference Requirement: Do you actually need to run a full object detection model, or would a simple audio classifier looking for a specific frequency spike do the job? Always default to the lightest possible mathematical model.

- Collect Data on the Target Hardware: Never train your model entirely on pristine, synthetic cloud data. You must collect your audio, vibration, or visual data using the exact cheap microphone or camera module that will be deployed in the field. Sensor noise ruins more AI models than poor coding.

- Quantize Aggressively: Convert your 32-bit floating-point weights to 8-bit integers (INT8). Yes, you will usually lose a little raw accuracy, so measure it on your own validation data. You will also shrink your model size by 4x and speed up inference dramatically on an NPU. It is almost always a worthwhile trade.

- Profile the Power Spikes: Use a specialized power profiler to watch your board’s energy draw during the exact millisecond the NPU fires up. If your battery’s internal resistance can’t handle that sudden current spike, the board will reset randomly. This is the most common reason edge devices fail in the wild.

- Plan for Over-the-Air (OTA) Model Updates: Your model will drift. The environment will change. If you hardcode the neural network into the firmware without a secure OTA update path via Wi-Fi or NB-IoT, you are building expensive bricks.
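The quantization step in that list is easier to trust once you have seen the arithmetic. Below is a minimal sketch of symmetric per-tensor INT8 quantization, which is one common scheme among several; production toolchains typically add calibration data, per-channel scales, and zero points. The names `QuantizedTensor`, `quantizeSymmetric`, and `dequantize` are mine, not from any particular framework.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

struct QuantizedTensor {
    std::vector<std::int8_t> values;  // one byte per weight instead of four
    float scale;                      // real value is roughly scale * values[i]
};

// Symmetric per-tensor quantization: map [-max_abs, +max_abs] onto [-127, 127].
QuantizedTensor quantizeSymmetric(const std::vector<float>& weights) {
    float max_abs = 0.0f;
    for (float w : weights) max_abs = std::max(max_abs, std::fabs(w));
    // An all-zero tensor gets an arbitrary scale of 1 to avoid dividing by zero.
    const float scale = (max_abs > 0.0f) ? max_abs / 127.0f : 1.0f;

    QuantizedTensor out{{}, scale};
    out.values.reserve(weights.size());
    for (float w : weights) {
        const float q = std::round(w / scale);
        const float clamped = std::max(-127.0f, std::min(127.0f, q));
        out.values.push_back(static_cast<std::int8_t>(clamped));
    }
    return out;
}

// Recover an approximate float weight from its quantized form.
float dequantize(const QuantizedTensor& t, std::size_t i) {
    assert(i < t.values.size());
    return t.scale * static_cast<float>(t.values[i]);
}
```

Each weight drops from four bytes to one, which is where the 4x size reduction comes from. The rounding error per weight is at most about half of `scale`, so a tensor with one huge outlier quantizes far worse than a well-behaved one.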

That checklist isn’t theoretical. It’s written in the blood, sweat, and blown budgets of dozens of failed hardware prototypes.
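The power-spike item deserves a quick worked check, because the failure mode is plain Ohm’s law: the voltage at the board is the battery’s open-circuit voltage minus peak current times internal resistance. This is a first-order sketch that ignores regulators and bulk capacitors, and every name and number in it is an illustrative assumption.

```cpp
#include <cassert>

// Simplified battery model (hypothetical): ideal source plus series resistance.
struct Battery {
    double open_circuit_v;
    double internal_resistance_ohm;
};

// Terminal voltage while the load draws `peak_current_a` amps.
double loadedVoltage(const Battery& b, double peak_current_a) {
    assert(peak_current_a >= 0.0);
    return b.open_circuit_v - peak_current_a * b.internal_resistance_ohm;
}

// True if the sagging supply stays above the board's brownout threshold.
bool survivesSpike(const Battery& b, double peak_current_a, double brownout_v) {
    return loadedVoltage(b, peak_current_a) > brownout_v;
}
```

As an illustration, a 3.0 V cell with an assumed 15 ohms of internal resistance sags to 2.25 V under a 50 mA spike, which is below an assumed 2.7 V brownout threshold. The board resets, and nothing in your firmware logs will tell you why. A power profiler gives you the real peak current to plug in.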

Data Breakdown: What Happens to the Open Source Ethos?

This is the elephant in the room.

Arduino is practically a religion for open-source hardware purists. The schematics are public. The bootloaders are accessible. The community thrives on the idea that you own the hardware you buy. Qualcomm, conversely, is fiercely protective of its intellectual property. They lock down their baseband processors with military-grade encryption and heavily restrict access to their proprietary AI toolchains.

Can these two cultures coexist?

It’s a massive friction point. If Qualcomm forces Arduino developers to sign NDAs just to access the NPU documentation, the community will revolt instantly. They will pack up their code and migrate to Espressif’s ESP32 platform or RISC-V alternatives before the ink on the acquisition contract even dries.

But let’s look at the historical precedent. When IBM announced its $34 billion purchase of Red Hat in 2018, the tech world screamed that Linux was doomed. It wasn’t. IBM was smart enough to keep Red Hat isolated, letting them maintain their open-source credibility while quietly siphoning enterprise value in the background.

Qualcomm has to play the exact same game here. They need to release a tiered hardware approach. Give the maker community the schematics for the base boards. Open-source the AI abstraction layer. Keep the deepest, lowest-level silicon IP hidden, but make the interfaces completely transparent. If a high school robotics team can’t figure out how to use the new chips without a corporate license, the acquisition is a failure.

The Supply Chain Angle Nobody is Talking About

There’s another massive layer to this that most software-focused analysts completely miss: manufacturing logistics.

If you tried to build a custom hardware product anytime between 2020 and 2023, you know exactly what I’m talking about. The chip shortage was brutal. We had clients redesigning their entire printed circuit boards (PCBs) three times in six months just to accommodate whatever random microcontrollers were actually in stock at Digi-Key or Mouser.

Arduino struggled during this period just like everyone else. They rely on third-party silicon from STMicroelectronics, Microchip, and Renesas. When those suppliers prioritize massive automotive contracts, the smaller players get starved out.

By absorbing Arduino, Qualcomm instantly solves this supply chain vulnerability for the edge computing market. Qualcomm has massive, priority agreements with foundries like TSMC and Samsung. They can guarantee silicon availability at a scale that Arduino could never achieve independently. For an enterprise engineer planning a rollout of 50,000 smart agriculture sensors, that guaranteed supply chain is actually more important than the AI specs.

You can’t deploy hardware you can’t buy, right?

Where Do Developers Go From Here?

The dust is still settling, but the trajectory is incredibly clear. The days of treating microcontrollers as dumb input/output switches are over. The edge is getting smart, and it’s getting smart incredibly quickly.

If you are a hardware hobbyist, this is the golden age. You are about to get access to silicon that was previously reserved for thousand-dollar smartphones, packaged in a friendly, thirty-dollar board. You’ll be able to build offline voice assistants, smart security cameras, and autonomous drones in your garage over a weekend.

If you are an enterprise IoT architect, your job just got fundamentally harder—and vastly more interesting. You can no longer blame hardware limitations for poor device performance. The compute power is there. The connectivity is there. The burden is now entirely on your ability to design efficient algorithms and manage the complex data orchestration between the edge and the cloud.

Start familiarizing yourself with quantization techniques. Move away from bloated Python scripts and get very comfortable with modern, memory-safe C++ or Rust for embedded systems. Understand how to profile power consumption at the microampere level.
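If microampere-level profiling sounds abstract, the core calculation is a time-weighted average. Here is a minimal sketch assuming a simple two-state device that wakes, runs inference, and goes back to sleep. The struct and function names are hypothetical, and the model ignores battery self-discharge and temperature effects.

```cpp
#include <cassert>

// One wake/sleep cycle of a duty-cycled device (hypothetical model).
struct DutyCycle {
    double active_ua;  // current while awake, in microamperes
    double active_s;   // seconds awake per cycle
    double sleep_ua;   // current while asleep, in microamperes
    double sleep_s;    // seconds asleep per cycle
};

// Time-weighted average current over one full cycle, in microamperes.
double averageCurrentUa(const DutyCycle& d) {
    const double period_s = d.active_s + d.sleep_s;
    assert(period_s > 0.0);
    return (d.active_ua * d.active_s + d.sleep_ua * d.sleep_s) / period_s;
}

// Idealized battery life in days for a pack of `capacity_mah` milliamp-hours.
double batteryLifeDays(double capacity_mah, const DutyCycle& d) {
    const double avg_ma = averageCurrentUa(d) / 1000.0;
    assert(avg_ma > 0.0);
    return capacity_mah / avg_ma / 24.0;
}
```

For example, a device that draws 100 mA for one second and then sleeps at 10 µA for 59 seconds averages roughly 1.7 mA, which an idealized 2000 mAh pack would sustain for about 50 days. Shortening the active window usually buys far more battery life than shaving the sleep current.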

The hardware barrier has officially been shattered. Qualcomm just bought the most important tollbooth on the road to edge AI. Now, we just have to figure out what to build with it.